Microsoft Copilot

The School has enabled Microsoft Copilot (formerly known as Bing Chat Enterprise) for all staff and students. It is the recommended generative AI tool for all LSE members because of its built-in security and data privacy: none of your inputs are ever stored. To use it, open the Edge browser and log in with your LSE email; a label saying ‘protected’ confirms you’re using the secure, work-appropriate interface.

In addition to the security features available when logged in with your LSE account, Microsoft Copilot is currently the best choice, alongside Perplexity AI, for general small-scale internet fact-finding. It is still built on an LLM, however, so the prompt is critical and it is always capable of making errors, meaning everything has to be verified. It uses the Bing search index, which restricts generic queries to websites Microsoft has deemed acceptable and reliable, so it struggles with niche queries (you can, however, give it any URL you want and it can read the content). As is a common theme for generative AI, this AI-enhanced search tool is helpful mainly as a starting point.

Note: Microsoft Copilot is not to be confused with Copilot for Microsoft 365, a much more capable paid service ($30 per user per month, not yet available to educational licenses) that securely integrates GPT-4 technology directly into M365 apps (Outlook, Word, Excel, PowerPoint, Teams) to enhance personal productivity. The tool can see all and only the data the individual user can see within the M365 ecosystem (e.g. your Outlook messages and SharePoint / OneDrive files), and includes advanced functionality such as summarising inboxes and past meetings, Excel analysis, and PowerPoint slide generation and editing, all securely grounded in the user’s data. While this is targeted towards everyday work productivity, it could of course be very valuable for research-related work. The same caveat applies as for all generative AI tasks that need accurate information retrieval from provided data sources: unless the task is very explicitly targeted, the LLM struggles to find accurate information when the volume of text it has to search is large.

Chat GPT and Chat GPT Team Plan

By default, Open AI saves the data of users on basic free plans to train its future models. For anything work-related (or that you wouldn't want to share) where MS Copilot isn’t viable, it’s therefore worth considering the Chat GPT Team plan, which is similar to the ‘Enterprise’ offering with enhanced security and privacy but requires only 2+ users per team. The Team plan includes all features of Chat GPT Plus, including the latest advanced reasoning models (o1), but all data can be deleted entirely after 30 days and is not used to train Open AI's models. However, because Open AI still stores the data for 30 days in the US, no personally identifiable information should ever be shared without a Data Protection Impact Assessment (DPIA) having been approved first. The Team plan costs $30 per user per month (compared to $20 for Chat GPT Plus), or $25 per user per month if purchased on an annual subscription. The additional cost reflects the enhanced security, privacy and usage limits.
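To make the pricing comparison concrete, the short sketch below works out the yearly cost for a unit of a given size. It assumes the per-user prices quoted above still apply and ignores tax and currency conversion; the function names are illustrative only and are not part of any Open AI tooling.

```python
# Per-user monthly prices in US dollars, as quoted in the text above.
# These are assumptions that may have changed since the time of writing.
TEAM_MONTHLY_BILLING = 30   # Team plan, billed month by month
TEAM_ANNUAL_BILLING = 25    # Team plan, billed as an annual subscription
PLUS_MONTHLY = 20           # Chat GPT Plus, shown for comparison


def team_plan_yearly_cost(users: int, annual_billing: bool) -> int:
    """Return the yearly Team plan cost in US dollars for `users` seats."""
    if users < 2:
        # The Team plan requires at least two users per team.
        raise ValueError("The Team plan requires at least 2 users")
    rate = TEAM_ANNUAL_BILLING if annual_billing else TEAM_MONTHLY_BILLING
    return users * rate * 12


def plus_yearly_cost(users: int) -> int:
    """Return the yearly cost of individual Plus subscriptions for `users`."""
    return users * PLUS_MONTHLY * 12


if __name__ == "__main__":
    seats = 5  # hypothetical unit size
    print("Team, monthly billing:", team_plan_yearly_cost(seats, False))
    print("Team, annual billing: ", team_plan_yearly_cost(seats, True))
    print("Plus, for comparison: ", plus_yearly_cost(seats))
```

For a hypothetical five-person unit, annual billing saves $300 a year over monthly billing on the Team plan, and the Team plan on annual billing costs $300 a year more than five separate Plus subscriptions.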

As of February 2024, LSE DTS permits academic units to purchase the Chat GPT Team Plan, provided the usage will never entail personal data. The following is the guidance DTS provides before purchase:

“Each AI provider has its own terms of service, security model, and end user agreements. It’s important to read through these so you understand what any AI service will do with your data, where your data will be stored, and if it can potentially be reused or regurgitated by other users of the service. You need to read these terms of service carefully before proceeding to use an AI service – if you need further help, please talk to the cyber security team via dts.cyber.security.and.risk@lse.ac.uk

In particular, please be aware:

It is highly inadvisable to prompt an AI service with any confidential or personal information.

It is unwise to trust the results as correct, without performing separate verification.

AI is prone to ‘hallucinations’ and may make up data it does not have, or otherwise refer to information inconsistently or incorrectly.

Data may be stored in the US or jurisdictions that do not meet the required standards of UK GDPR, so if any research data is input into it check that doing so wouldn't breach any research data agreement.

Remember that with most AI services there aren't any guarantees of service.”

Other LLMs

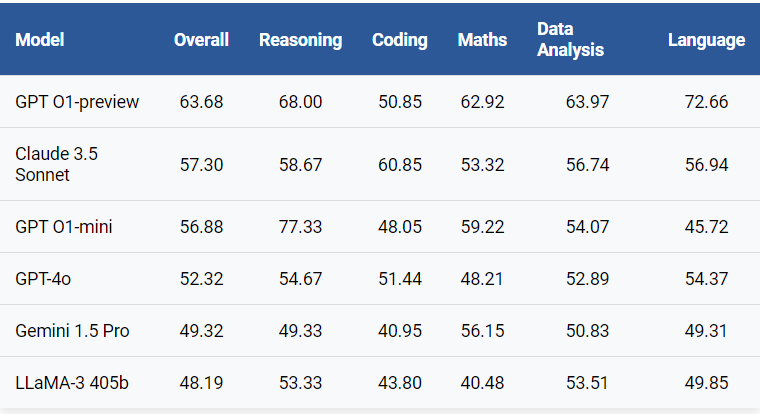

Below is the latest leaderboard from LiveBench from September 2024, a private (and therefore uncontaminated, meaning models won't have been trained on the questions) benchmark with challenging questions across various categories.

AI tools dedicated to literature-related research

The common theme for popular commercial AI applications that explicitly pitch themselves towards ‘research’ is that they integrate LLM technology with search engine technology to accelerate and, to a limited extent, enhance literature searching and (very limited) reviewing. This reflects the misleading lay perception of research, which is often thought of as essentially just ‘reading’. There are some literature mapping tools (such as Research Rabbit (free), SciSpace (free + paid), Semantic Scholar (free) and Connected Papers (free + paid)) that don’t use LLMs to enhance searches (they use limited semantic NLP instead) but are nonetheless very useful for building up literature collections. In fact, they are arguably better for systematic reviews, where time saving is less important than comprehensive coverage.

The most popular tools that use advanced generative AI to support literature review are Elicit, Scite AI and, to a lesser extent (as it’s designed for global searching, not just scholarly databases), Perplexity AI. As with any software application, while they fundamentally perform similar functions, they each have distinct value propositions and interfaces that will be more useful for some researchers in some contexts. Elicit is perhaps the best starting point for developing not only a collection of relevant papers but also succinct AI-generated summaries, key findings and limitations. Scite’s main value is in using a form of sentiment analysis to classify citations of a paper as supporting, contrasting or merely mentioning it, which can speed up evaluating papers as part of the review process. Perplexity is less explicitly dedicated to scholarly research but is extraordinarily fast and useful for finding any live information on the web, and it enables a continual dialogue based on its findings.

As with all generative AI based on LLMs, any generated content must be verified independently, as their non-deterministic outputs naturally create ‘hallucinations’, and this can happen even when it looks like the tool is citing a direct source. Scite extracts direct quotes from individual papers rather than producing a generative AI summary, which for most academics may be more helpful. At most, these tools can help speed up identifying relevant scholarly resources with snapshot summaries of key information, but they do not even begin to substitute for engaged direct reading. In fact, one of the biggest risks as AI quality improves is researchers outsourcing the ‘reading’ and simply accepting summaries as representative, which not only loses context and therefore information, but risks eroding cognitive capabilities that can only remain sharp with continued engagement.

Elicit can help speed up exploratory (but not systematic) literature reviews thanks to using advanced AI semantic search to identify relevant papers without the need for comprehensive or exact keywords. Like any tool that relies on searching data, it is limited by what it can access, and Elicit cannot access scholarly work behind a paywall, which includes many books.

It also includes LLM functionality to extract key information from, and/or summarise, retrieved articles as well as PDFs the user uploads, and to present the results in a table whose columns include the title, abstract summary, main findings, limitations etc. As with any LLM, the results of such summaries or information retrieval cannot be relied upon and should only be seen as a starting point. The time saving may well be worth it, at least for exploring a new research area, compared to brute-force keyword searching in Google Scholar.

As of 2024, Elicit comes with a limited free option which doesn’t include paper summarisation or exporting. The paid subscription includes up to 8 paper summaries.

Scite is similar to Elicit in terms of LLM-enhanced semantic searching to identify papers (again, limited to the scholarly databases it has access to). While it uses LLM technology to summarise a collection of papers from the search results, rather than being able to summarise key findings, limitations etc. like Elicit, it provides a view with short direct quotations from the paper along with direct links to the paper itself. In some cases this may be more useful than a potentially flawed LLM summary.

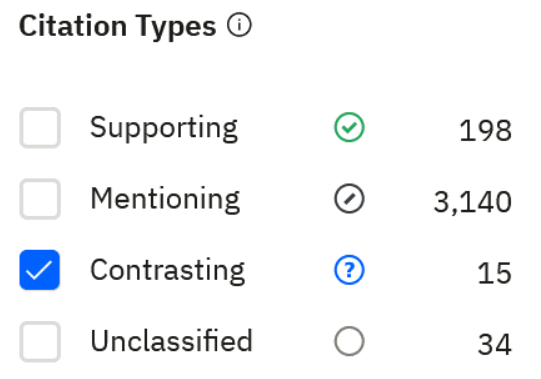

The main value offering of Scite is that it incorporates a limited evaluation of the nature of citation statements, to help a researcher get a quicker feel for the extent to which authors who cite a particular paper are supporting or critiquing it:

In reality, the overwhelming majority of citations are neutral, so the benefit is marginal, though it could still be worth it to help accelerate discovery.

As with Elicit, the value is far higher when exploring a new research area, where it speeds up a researcher’s engagement with the literature. Identifying contrasting citations in particular can be extremely helpful for accelerating critical engagement with past studies or authors. As always, this is a useful starting point from which a researcher can delve deeper into any paper and engage with it directly. Even imagining a future with AI brain implants, it’s difficult to see how any human can actually engage with academic concepts and research effectively without cognitive effort. But tools like Scite and Elicit can certainly help speed up the identification of useful avenues towards which to direct that intensive cognitive effort.

Scite does not offer a free version but it does offer a free trial for 7 days, after which it’s paid.

While Perplexity can be used to search scholarly content, it’s a more ambitious platform than Elicit and Scite in that it incorporates web browsing as well as academic research articles, and has a built-in chat interface for interrogating and following up on results, much like Chat GPT. The paid subscription allows you to choose between language models, including GPT-4 and the latest Claude models, which immediately makes it more valuable if quality of language and reasoning is important (rather than just searching and ranking, for instance). It’s also extraordinarily fast at returning and summarising information gained through searching the web. To achieve that speed it sacrifices comprehensiveness, and its search results can be disappointing for scholarly literature reviews compared to Elicit or Scite. At the time of writing, the paid version is superior to the browsing functionality of Chat GPT Plus or MS Copilot for purely finding and conversing about live information on the web. Some consider that fact-finding value more important and prefer paid Perplexity over paid Chat GPT or Claude, even though the latter offer more versatility and longer context limits.